Share some MCQs on the topic of "Binary Classification and Multiclass Classification"

Multiple Choice Questions on Binary Classification and Multiclass Classification

Which of the following statements is TRUE about binary classification?

- a) It can predict more than two possible outcomes for a given instance.

- b) It is commonly used for tasks like spam detection (spam or not spam).

- c) It always requires a sigmoid activation function in the output layer.

- d) It cannot be used with logistic regression models.

In multiclass classification, how many possible classes can the target variable have?

- a) Exactly two, like "positive" or "negative".

- b) Any number greater than two.

- c) It depends on the specific dataset.

- d) Multiclass classification is not possible with neural networks.

Which of the following is a common loss function used for binary classification problems?

- a) Mean squared error (MSE)

- b) Hinge loss (used in Support Vector Machines)

- c) Cross-entropy (can be used for both binary and multiclass classification)

- d) Mean absolute error (MAE)

What is a potential challenge of using one-vs-rest multiclass classification (e.g., training separate binary classifiers for each class)?

- a) It can be computationally expensive for a large number of classes.

- b) It might not capture relationships between different classes.

- c) It is not suitable for datasets with imbalanced class distributions.

- d) It requires a specific activation function in the output layer.

Which of the following classification algorithms can be used for both binary and multiclass classification problems?

- a) K-nearest neighbors (KNN)

- b) Naive Bayes

- c) Logistic regression (can be adapted for multiclass classification with one-vs-rest)

- d) All of the above

Answer Key:

- b) It is commonly used for tasks like spam detection (spam or not spam).

- b) Any number greater than two.

- c) Cross-entropy (can be used for both binary and multiclass classification)

- b) It might not capture relationships between different classes.

- d) All of the above (KNN, Naive Bayes, and Logistic Regression with one-vs-rest can be used for both binary and multiclass classification)

Can you share the exact form of cross-entropy as used for Binary Classification?

The exact form of cross-entropy used for binary classification is the binary cross-entropy loss (also known as log loss or logistic loss). It measures the difference between the predicted probability (y_hat) from your model and the actual binary label (y) for each data point.

Here's the formula:

Loss = - (y * log(y_hat) + (1 - y) * log(1 - y_hat))

Explanation:

Loss: The cross-entropy loss for a single data point.

y: The actual binary label (0 or 1).

- 0 typically represents the negative class.

- 1 typically represents the positive class.

y_hat: The predicted probability of the positive class (between 0 and 1).

Breakdown:

y * log(y_hat): This term is active only when the actual label y is 1 (positive class). If the predicted probability y_hat is close to 1 (a confident, correct prediction), log(y_hat) is close to 0 and the term contributes almost nothing. As y_hat moves toward 0, log(y_hat) becomes a large negative number, which the leading minus sign turns into a large loss.

(1 - y) * log(1 - y_hat): This term is active only when the actual label y is 0 (negative class). If 1 - y_hat (the predicted probability of the negative class) is close to 1, the term is close to 0. As 1 - y_hat shrinks toward 0, the loss grows.

Overall Loss:

Both logarithms are at most 0 because their arguments are probabilities, so the leading negative sign (-) makes the loss non-negative. The loss approaches 0 when the model predicts the correct class with high confidence and grows without bound as the model becomes confidently wrong.

By averaging the cross-entropy loss across all data points in your training set, you can use it as a cost function to optimize your model during training. The goal is to minimize the overall cross-entropy loss, leading to better alignment between the model's predictions and the true labels.
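The formula above can be sketched in a few lines of plain Python. This is a minimal illustration, not a library implementation: the function names and the clipping constant `eps` are assumptions made for this example, and the probabilities are made up.

```python
import math

def binary_cross_entropy(y, y_hat, eps=1e-12):
    """Loss for one example: y is 0 or 1, y_hat is the predicted P(class = 1)."""
    # Clip so log() never receives exactly 0 (eps is an illustrative choice).
    y_hat = min(max(y_hat, eps), 1.0 - eps)
    return -(y * math.log(y_hat) + (1 - y) * math.log(1.0 - y_hat))

def mean_binary_cross_entropy(labels, probs):
    """Average the per-example loss over a dataset."""
    losses = [binary_cross_entropy(y, p) for y, p in zip(labels, probs)]
    return sum(losses) / len(losses)

confident_right = binary_cross_entropy(1, 0.9)  # true label 1, predicted 0.9
confident_wrong = binary_cross_entropy(1, 0.1)  # true label 1, predicted 0.1
batch_loss = mean_binary_cross_entropy([1, 0, 1], [0.9, 0.2, 0.6])
```

Here `confident_right` is about 0.105 and `confident_wrong` is about 2.303, which shows how sharply the loss penalizes a confident mistake.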

Can you share the exact form of cross-entropy as used for Multiclass classification?

The exact form of cross-entropy used for multiclass classification is called categorical cross-entropy loss. It extends the concept of binary cross-entropy to handle situations where the target variable can have more than two possible classes. Here's the formula:

Loss = - ∑ (y_i * log(y_hat_i))

Explanation:

Loss: The cross-entropy loss for a single data point. The loss for a batch is the average of this value over the data points in the batch.

∑: The summation over all classes i.

y_i: Element i of the actual label, which is a one-hot encoded vector.

- In multiclass classification, the label is typically represented as a one-hot encoded vector with a length equal to the number of classes. The element corresponding to the true class will be 1, and all other elements will be 0.

y_hat_i: Element i of the predicted probability vector, i.e. the predicted probability of class i.

- This vector also has a length equal to the number of classes, with each element representing the predicted probability of the corresponding class for that data point. The sum of all elements in this vector should be 1 (it represents a probability distribution across all classes).

Breakdown:

- The summation iterates over the classes for one data point.

- For each class:

y_i * log(y_hat_i): This term is the contribution of a single class. It multiplies the actual label value (y_i, which is 1 for the true class and 0 for the others) by the logarithm of the corresponding predicted probability (y_hat_i). Because y_i is 1 only for the true class, every other term vanishes and the loss reduces to the negative log of the probability predicted for the true class. This encourages the model to assign a higher probability to the true class and, since the probabilities sum to 1, lower probabilities to the incorrect classes.

- Averaging these per-data-point losses over the batch gives the cross-entropy loss for the entire batch.

Minimizing Loss:

Similar to binary cross-entropy, the negative sign (-) ensures that minimizing the loss function leads to better model predictions. When the model assigns high probabilities to the true class for each data point (and low probabilities to incorrect classes), the overall cross-entropy loss is minimized.

Softmax Activation:

Categorical cross-entropy loss is typically used in conjunction with the softmax activation function in the output layer of a neural network model for multiclass classification. The softmax function transforms the raw output values from the network into a probability distribution across all classes, ensuring the sum of predicted probabilities for a data point is always 1, making it suitable for calculating the cross-entropy loss effectively.

In essence, categorical cross-entropy loss provides a way to measure the discrepancy between the predicted class probabilities and the actual class labels in multiclass classification, guiding the model towards making more accurate predictions during training.
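As a rough illustration of how softmax and categorical cross-entropy fit together, here is a small pure-Python sketch for a single data point with three classes. The function names, the `eps` guard, and the example scores are assumptions made for this example.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def categorical_cross_entropy(one_hot, probs, eps=1e-12):
    """Loss for one example: sum over classes of -y_i * log(y_hat_i)."""
    return -sum(y * math.log(max(p, eps)) for y, p in zip(one_hot, probs))

logits = [2.0, 1.0, 0.1]  # hypothetical raw network outputs for 3 classes
probs = softmax(logits)

# The same prediction scored against two different true labels.
loss_true_class_0 = categorical_cross_entropy([1, 0, 0], probs)
loss_true_class_2 = categorical_cross_entropy([0, 0, 1], probs)
```

The loss is small when the true class is the one the model favors (class 0 here) and much larger when the true class received a low probability (class 2).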

Can you share some complex scenario based questions on the topic of "Binary and Multiclass Classification"?

Complex Scenario-Based Questions on Binary and Multiclass Classification:

Scenario 1: Imbalanced Classes and Cost-Sensitive Learning

- Context: You're building a fraud detection model for a bank. The dataset has a highly imbalanced class distribution, with a very small number of fraudulent transactions compared to normal transactions.

- Question: How can you address the challenge of imbalanced classes in this scenario? Discuss potential solutions and their trade-offs.

- Answer: Discuss techniques like:

- Oversampling: Over-representing the minority class (fraudulent transactions) by duplicating existing data points or using synthetic data generation techniques. This can improve model performance on the minority class but might lead to overfitting.

- Undersampling: Reducing the majority class (normal transactions) to balance the class distribution. This can improve performance on the minority class but might lead to losing information from the majority class.

- Cost-sensitive learning: Assigning higher weights to misclassifying fraudulent transactions during training. This penalizes the model more for missing fraud cases. Consider the trade-off between overall accuracy and correctly identifying fraudulent transactions.
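Two of the techniques above, class weighting and random oversampling, can be sketched in plain Python. This is a simplified illustration on toy data: the helper names are hypothetical, and the weighting rule shown (n_samples / (n_classes * class_count)) is one common "balanced" heuristic rather than the only option.

```python
import random
from collections import Counter

def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

def random_oversample(rows, labels, seed=0):
    """Duplicate minority-class rows at random until every class matches the largest."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_rows, out_labels = list(rows), list(labels)
    for cls, c in counts.items():
        pool = [r for r, y in zip(rows, labels) if y == cls]
        for _ in range(target - c):
            out_rows.append(rng.choice(pool))
            out_labels.append(cls)
    return out_rows, out_labels

# Toy data: 8 normal transactions (0) and 2 fraudulent ones (1).
labels = [0] * 8 + [1] * 2
rows = [[float(i)] for i in range(10)]

weights = balanced_class_weights(labels)
new_rows, new_labels = random_oversample(rows, labels)
```

On this toy data the fraud class gets weight 2.5 and the normal class 0.625. Oversampling should be applied to the training split only, so that duplicated rows never leak into the evaluation data.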

Scenario 2: Choosing the Right Classifier for Text Classification

- Context: You're developing a sentiment analysis model to classify customer reviews into positive, negative, and neutral categories.

- Question: What factors would you consider when choosing a suitable classification algorithm for this task? How would you compare and contrast the performance of models like Logistic Regression, Naive Bayes, and Support Vector Machines (SVMs) for this specific problem?

- Answer: Discuss factors like:

- Data characteristics: Text data is typically high-dimensional and sparse. Consider models that handle these characteristics well.

- Interpretability: If understanding the rationale behind classifications is important, models like Logistic Regression or Naive Bayes might be preferred over harder-to-inspect models such as SVMs with non-linear kernels.

- Scalability: If you expect a large volume of reviews, consider the computational efficiency of training and classifying new data points with each model.

- Experiment and compare the performance of these models on a held-out test set using metrics like accuracy, precision, recall, and F1-score for each sentiment class.
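The per-class metrics mentioned above can be computed directly from the true and predicted labels. The sketch below is a plain-Python illustration with made-up labels; the function name is an assumption for this example.

```python
def per_class_metrics(y_true, y_pred):
    """Return {class: (precision, recall, f1)} for every class that appears."""
    classes = sorted(set(y_true) | set(y_pred))
    report = {}
    for cls in classes:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        report[cls] = (precision, recall, f1)
    return report

# Hypothetical sentiment labels for six reviews.
y_true = ["pos", "pos", "neg", "neg", "neu", "neu"]
y_pred = ["pos", "neg", "neg", "neg", "neu", "pos"]
report = per_class_metrics(y_true, y_pred)
```

Looking at each class separately matters for sentiment analysis because a model can score well overall while performing poorly on one class, often the neutral one.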

Scenario 3: Multiclass vs. Hierarchical Classification for Image Recognition

- Context: You're building an image recognition system to classify different types of clothing (shirts, pants, dresses, etc.).

- Question: Should you use a multiclass classification approach with all clothing types as separate classes, or could a hierarchical classification approach be more efficient? Explain your reasoning.

- Answer: Discuss both approaches:

- Multiclass classification: Simple and straightforward, but the number of classes can grow large, leading to increased training complexity.

- Hierarchical classification: Organizes classes into a tree-like structure (e.g., tops vs. bottoms, then further classifying tops into shirts, jackets, etc.). This can be more efficient for a large number of related classes and might capture inherent relationships between clothing types. Consider the trade-off between simplicity and potential performance gains with hierarchical classification.

Scenario 4: Dealing with Class Imbalance and Multiclass Classification

- Context: You're building a medical diagnosis system to classify different types of diseases based on patient data. The dataset has a class imbalance, with some diseases being much rarer than others.

- Question: How can you address the challenges of both imbalanced classes and multiclass classification in this scenario? Discuss potential strategies.

- Answer: Combine techniques for imbalanced classes (oversampling, undersampling, or cost-sensitive learning) with multiclass classification approaches (e.g., one-vs-rest strategy or hierarchical classification if suitable). Evaluate different strategies on a held-out test set to find the best combination for this specific problem.

Scenario 5: Ensemble Methods for Robust Classification

- Context: You've built a binary classification model to predict customer churn for a telecommunications company. However, the model's performance is not optimal, and you'd like to improve its accuracy.

- Question: How can you leverage ensemble methods to potentially improve the performance and robustness of your churn prediction model? Discuss specific ensemble techniques that could be beneficial.

- Answer: Discuss ensemble methods like:

- Random Forest: Trains multiple decision trees on subsets of the data and aggregates their predictions. This can reduce variance and improve generalization compared to a single model.

- Boosting (e.g., AdaBoost): Sequentially trains models, focusing on data points that the previous model struggled with. This can lead to strong learners by combining weaker models.

- Consider experimenting with different ensemble methods to see if they outperform your initial model for customer churn prediction.
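To make the bagging idea behind Random Forest concrete, here is a deliberately tiny sketch: each "model" is a one-feature threshold rule (a decision stump) trained on a bootstrap sample, and the ensemble predicts by majority vote. The data, the feature, and all function names are hypothetical; a real churn model would use many features and full decision trees.

```python
import random
from collections import Counter

def fit_stump(xs, ys):
    """Pick the threshold t (predict 1 when x >= t) with the fewest training errors."""
    candidates = sorted(set(xs)) + [float("inf")]  # inf means "always predict 0"
    best_t, best_errors = None, None
    for t in candidates:
        errors = sum((1 if x >= t else 0) != y for x, y in zip(xs, ys))
        if best_errors is None or errors < best_errors:
            best_t, best_errors = t, errors
    return best_t

def bagged_stumps(xs, ys, n_models=25, seed=0):
    """Train each stump on a bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    thresholds = []
    for _ in range(n_models):
        idx = [rng.randrange(n) for _ in range(n)]
        thresholds.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return thresholds

def predict(thresholds, x):
    """Majority vote across the stumps."""
    votes = Counter(1 if x >= t else 0 for t in thresholds)
    return votes.most_common(1)[0][0]

# Toy data: hypothetical "support calls last month" -> churned (1) or not (0).
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
ys = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1]
model = bagged_stumps(xs, ys)
low, high = predict(model, 1), predict(model, 10)
```

Individual stumps disagree about where exactly the threshold lies because each saw a different resample, and the vote averages out that variation.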

By preparing for these complex scenarios, you can demonstrate your ability to think critically about classification problems beyond just choosing a model and applying it. You'll showcase your understanding of the impact of factors like imbalanced classes, data characteristics, and the choice of classification algorithm on model performance. Additionally, you'll highlight your knowledge of advanced techniques like cost-sensitive learning and ensemble methods that can be used to address challenges and improve classification accuracy in real-world applications. Remember to emphasize your ability to experiment, evaluate different approaches, and select the most suitable solution for the specific problem at hand.

Solve this: Scenario 2: Choosing the Right Classifier for Text Classification Context: You're developing a sentiment analysis model to classify customer reviews into positive, negative, and neutral categories. Question: What factors would you consider when choosing a suitable classification algorithm for this task? How would you compare and contrast the performance of models like Logistic Regression, Naive Bayes, and Support Vector Machines (SVMs) for this specific problem?

Here's a breakdown of factors to consider and a comparison of Logistic Regression, Naive Bayes, and SVMs for sentiment analysis:

Factors to Consider:

Data Characteristics:

- Text data is high-dimensional: Reviews can contain a large vocabulary of words.

- Text data is sparse: Most words won't appear in every review.

Interpretability:

- Understanding why a review is classified as a certain sentiment might be valuable.

Scalability:

- The system might need to handle a large volume of reviews in real-time.

Performance:

- Accuracy, precision, recall, and F1-score are crucial metrics for sentiment analysis.

Model Comparison:

Logistic Regression:

- Pros: Simple, interpretable (coefficients show word importance), efficient for large datasets.

- Cons: Might not capture complex relationships between words in sentiment. Might require feature engineering (e.g., word embeddings) to improve performance on high-dimensional text data.

Naive Bayes:

- Pros: Efficient, handles sparse data well (due to its independence assumption), interpretable (class probabilities for each word).

- Cons: Naive independence assumption between words might not hold true for sentiment analysis. Might underperform compared to more complex models on complex datasets.

Support Vector Machines (SVMs):

- Pros: Effective in high-dimensional, sparse feature spaces. A linear kernel is often a strong choice for text, while non-linear kernels (e.g., RBF) can model more complex relationships at a higher computational cost.

- Cons: Less interpretable when non-linear kernels are used, can be computationally expensive to train for large datasets, requires careful parameter tuning (kernel selection, regularization) to avoid overfitting.

Summary:

- Logistic Regression is a good starting point due to its simplicity, interpretability, and scalability. However, it might require feature engineering for optimal performance.

- Naive Bayes is efficient and handles sparse data well, but its independence assumption might limit its effectiveness in capturing complex sentiment patterns.

- SVMs can achieve high accuracy but require more computational resources and careful hyperparameter tuning. They offer less interpretability compared to Logistic Regression or Naive Bayes.

Recommendation:

Start with Logistic Regression or Naive Bayes due to their interpretability and efficiency. If performance isn't satisfactory, consider SVMs with careful hyperparameter tuning or explore more advanced models like Recurrent Neural Networks (RNNs) that can capture sequential information in text data, potentially leading to better sentiment analysis.

Additional Considerations:

- Evaluate all models on a held-out test set using relevant metrics like accuracy, precision, recall, and F1-score to determine the best performing model for your specific sentiment analysis task.

- Consider the trade-off between interpretability and performance. If understanding why a review is classified as a certain sentiment is crucial, Logistic Regression or Naive Bayes might be preferred despite potentially lower accuracy compared to a complex model like an SVM.

Remember, the best model choice depends on your specific data, performance requirements, and interpretability needs.

Can you explain Multiclass vs. Hierarchical Classification approach for Master Data Management project?

Multiclass vs. Hierarchical Classification for Master Data Management (MDM)

In Master Data Management (MDM), you deal with classifying entities (products, customers, locations, etc.) to ensure consistency and accuracy across different systems within an organization. Choosing between a multiclass and hierarchical classification approach depends on the characteristics of your master data and the desired level of granularity in your classifications.

Multiclass Classification:

- Concept: Each entity belongs to exactly one of a predefined set of mutually exclusive classes.

- Example: Classifying products into categories like "electronics," "clothing," "furniture," etc. Each product belongs to only one category.

Advantages:

- Simple to implement and understand.

- Efficient for classification tasks with a limited number of well-defined categories.

Disadvantages:

- Might not be suitable for complex taxonomies with many subcategories.

- Adding new categories requires modifying the entire classification scheme.

- Might not capture inherent relationships between classes.

Hierarchical Classification:

- Concept: Entities are classified into a tree-like structure where broader categories are further divided into subcategories. An entity can belong to multiple classes at different levels of the hierarchy.

- Example: Classifying products into a hierarchy like "Electronics > TVs > LED TVs," "Electronics > TVs > OLED TVs," "Furniture > Bedroom Furniture > Beds," etc.

Advantages:

- More flexible and scalable for complex taxonomies with many subcategories.

- Easier to add new categories by placing them within the existing hierarchy.

- Captures inherent relationships between classes (e.g., all LED TVs are also TVs and Electronics).

Disadvantages:

- Can be more complex to implement and maintain compared to multiclass.

- Requires careful design of the hierarchical structure to avoid ambiguity.
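The difference between the two views of a label can be sketched with the product paths used above. This is only an illustration of the data representation, assuming categories are stored as " > "-separated path strings; the helper names are hypothetical.

```python
def ancestors(path, sep=" > "):
    """Expand 'A > B > C' into every level it implies: A, A > B, A > B > C."""
    parts = path.split(sep)
    return [sep.join(parts[:i]) for i in range(1, len(parts) + 1)]

def flat_label(path, sep=" > "):
    """The multiclass view keeps only the leaf category."""
    return path.split(sep)[-1]

product_path = "Electronics > TVs > LED TVs"
levels = ancestors(product_path)  # hierarchical view: one label per level
leaf = flat_label(product_path)   # multiclass view: a single flat label
```

In the hierarchical view the same product carries three labels, one per level, which is what lets a system treat every LED TV as a TV and as Electronics. The flat view keeps only "LED TVs" and loses that relationship.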

Choosing the Right Approach for MDM:

- Use multiclass classification if:

- You have a limited number of well-defined categories.

- The relationships between categories are not important.

- Simplicity and ease of implementation are priorities.

- Use hierarchical classification if:

- You have a complex taxonomy with many subcategories.

- You anticipate adding new categories in the future.

- Capturing relationships between categories is valuable for your data management processes.

Additional Considerations:

- Hybrid Approach: You can even combine both approaches. For example, you might have a top-level multiclass classification (e.g., "Products," "Customers," "Locations") and then use hierarchical classification within each category to further classify entities.

- Data Analysis: Analyze your existing master data to understand the distribution of entities across categories and subcategories. This can help you determine the level of granularity and hierarchy needed for effective data management.

By carefully considering the characteristics of your master data and the desired level of detail in your classifications, you can choose the most suitable approach (multiclass, hierarchical, or hybrid) to ensure accurate and consistent data across your MDM system.

Sunday, May 5, 2024

Day 6: MCQs and Scenario Based Complex Questions on The Topic of "Binary and Multiclass Classification"

Wednesday, May 1, 2024

Day 5: Complex Scenario Based Questions For Anomaly Detection Project (May 2024)

Please share some complex scenario based questions for this use case from my resume for the interview for the position of data scientist: Anomaly Detection in ‘Amex Loyalty Platform’ Detection of anomalies in transaction data coming for electronic payment card holders. We identify if we are having spikes or drops in rewards, transaction amount or number of transactions for a product or merchant or business line. Data pre-processing: (I) Using Pandas, and (II) PySpark Models: (I) Auto-encoders based using TensorFlow, (II) k-Means based using PySpark, (III) Isolation Forest based using Scikit Learn. Deployment: On Hive based cluster using Python UDFs as the database is Hive.

Complex Scenario-Based Questions for Anomaly Detection in Amex Loyalty Platform (for Data Scientist Interview)

Here are some complex scenario-based questions related to your anomaly detection experience in the Amex Loyalty Platform that you can anticipate during your data scientist interview:

Scenario 1: Model Explainability and Feature Engineering

- Question: You've implemented anomaly detection models for Amex rewards, transaction amounts, and number of transactions. How would you explain an anomaly flagged by an autoencoder model to a business stakeholder who might not have a technical background? How can feature engineering further enhance anomaly detection in this use case?

- Answer: Explain the anomaly in simpler terms, like "a significant deviation from the typical reward pattern for this product category." Utilize visualizations like bar charts or time series plots to show the anomaly compared to the historical trend. Feature engineering could involve creating new features like ratios (reward per transaction), categorizing transactions by time of day or day of week, or calculating rolling averages to capture trends.
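The feature-engineering ideas in this answer (ratios and rolling averages) can be sketched in plain Python. The numbers, the window size, and the names below are made up for illustration; in the pipeline described in the question, the same logic would more likely be written with Pandas or PySpark.

```python
def rolling_mean(values, window):
    """Mean of the previous `window` values, excluding the current one."""
    out = []
    for i in range(len(values)):
        past = values[max(0, i - window):i]
        out.append(sum(past) / len(past) if past else None)
    return out

# Hypothetical daily totals for one merchant.
rewards = [100.0, 110.0, 90.0, 105.0, 400.0]
transactions = [50, 55, 45, 50, 52]

# Ratio feature: reward points granted per transaction.
reward_per_txn = [r / t for r, t in zip(rewards, transactions)]

# Trend feature: trailing 3-day baseline of rewards.
baseline = rolling_mean(rewards, window=3)

# Ratio of each day's value to its trailing baseline; large values suggest a spike.
spike_ratio = [r / b if b else None for r, b in zip(rewards, baseline)]
```

On the last day the rewards are roughly 3.9 times the trailing baseline, which is the kind of plain statement ("rewards were about four times the usual level") that a non-technical stakeholder can act on.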

Scenario 2: Handling Imbalanced Data and Class Imbalance

- Question: Imagine your anomaly detection model for transaction amounts is flagging a very small number of anomalies compared to the vast majority of normal transactions. This class imbalance can affect model performance. How would you address this challenge?

- Answer: Acknowledge the class imbalance and discuss potential solutions. Techniques like SMOTE (Synthetic Minority Oversampling Technique) can be used to oversample the anomaly class in the training data. Alternatively, consider cost-sensitive learning where the model assigns higher weights to misclassifying anomalies (minority class) during training.

Scenario 3: Model Selection and Trade-offs

- Question: You've implemented three different anomaly detection models (autoencoders, k-Means, Isolation Forest). How would you decide which model is best suited for a specific scenario like detecting anomalies in the number of transactions for a new merchant category?

- Answer: Discuss the strengths and weaknesses of each model. Autoencoders might be suitable for capturing complex patterns, but interpretability might be lower. K-Means excels at identifying clusters but struggles with non-spherical data distributions. Isolation Forest is robust to outliers but might not capture specific patterns well. Evaluate each model's performance on a held-out test set and consider factors like interpretability (if crucial), computational efficiency, and the specific characteristics of the anomaly you're targeting (e.g., point anomalies vs. contextual anomalies).

Scenario 4: Anomaly Validation and Alerting Strategy

- Question: How would you validate the anomalies flagged by your models to ensure they're not false positives? How would you design an alerting strategy for these anomalies?

- Answer: Discuss techniques for anomaly validation. You could involve domain experts to review flagged transactions and confirm if they're genuine anomalies. Implement an alerting strategy with different thresholds or severity levels based on the type and potential impact of the anomaly. Consider integrating anomaly alerts with existing fraud detection or risk management workflows for further investigation and potential action.

Scenario 5: Scalability and Performance Considerations

- Question: Your anomaly detection system is deployed on a Hive-based cluster using Python UDFs. How would you ensure the system scales efficiently as the transaction volume increases? How would you monitor the performance of your anomaly detection models?

- Answer: Discuss strategies for scalability. You could explore Apache Spark for distributed processing of large datasets. Consider using optimized libraries for anomaly detection algorithms. Monitor model performance metrics like precision, recall, and F1-score to track the effectiveness of anomaly detection over time. Additionally, monitor false positive rates to avoid overwhelming analysts with irrelevant alerts.

By preparing for these complex scenarios and demonstrating your thought process for addressing them, you can showcase your expertise in anomaly detection and impress potential employers during your data scientist interview. Remember to highlight your ability to not only implement models but also understand their limitations, consider business context, and design robust anomaly detection systems for real-world applications.

Tags: Interview Preparation, Technology, Machine Learning

Tuesday, April 30, 2024

MCQs and Complex Scenario Based Questions on Decision Tree Regressor

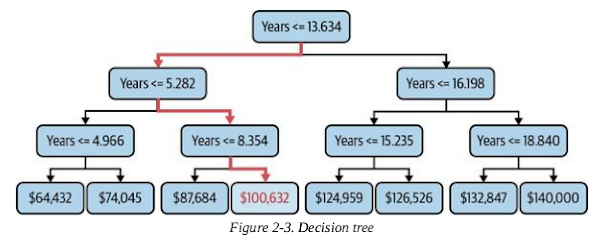

Decision Tree to Model: Years-of-Experience vs. Salary

The prediction surface from a DecisionTreeRegressor:

Share some MCQs on DecisionTreeRegressor

Multiple Choice Questions on DecisionTreeRegressor

Here are some multiple choice questions (MCQs) to test your understanding of DecisionTreeRegressor in scikit-learn:

Which of the following is NOT a splitting criterion used by scikit-learn decision trees (DecisionTreeClassifier or DecisionTreeRegressor)?

- a) Mean squared error (MSE)

- b) Information gain

- c) Gini impurity

- d) Root mean squared error (RMSE)

What parameter in DecisionTreeRegressor controls the maximum depth of the tree?

- a) min_samples_split

- b) max_depth

- c) min_samples_leaf

- d) criterion

What is the purpose of the min_samples_split parameter in DecisionTreeRegressor?

- a) It determines the minimum number of samples required to split a node.

- b) It specifies the minimum number of samples required at a leaf node.

- c) It sets the maximum depth of the tree.

- d) It defines the splitting criterion used for decision making.

What is the advantage of using DecisionTreeRegressor compared to linear regression models for certain tasks?

- a) Decision trees can capture non-linear relationships between features and the target variable.

- b) Decision trees are always more accurate than linear regression models.

- c) Decision trees require less data to train compared to linear regression models.

- d) Decision trees are inherently more interpretable than linear regression models.

What is a potential drawback of decision trees?

- a) Decision trees are not suitable for handling categorical features.

- b) Decision trees can suffer from overfitting, especially with high-dimensional data.

- c) Decision trees are computationally expensive to train compared to linear regression models.

- d) Decision trees cannot be used for regression tasks.

Answer Key:

- d) Root mean squared error (RMSE) (RMSE is a metric, not a splitting criterion)

- b) max_depth

- a) It determines the minimum number of samples required to split a node.

- a) Decision trees can capture non-linear relationships between features and the target variable.

- b) Decision trees can suffer from overfitting, especially with high-dimensional data.

What is the advantage of setting "min_samples_split"?

The main advantage of setting min_samples_split in a DecisionTreeRegressor is to prevent overfitting and improve the model's generalization ability. Here's how it works:

- Decision Trees and Overfitting: Decision trees can potentially overfit the training data, especially with high-dimensional datasets. This means the tree becomes too specific to the training data and performs poorly on unseen data.

- Role of min_samples_split: This parameter sets the minimum number of samples a node must contain before it can be split. By requiring a minimum number of samples at each split, you avoid creating overly specific branches based on noise or small fluctuations in the data. This helps to:

- Reduce Variance: By ensuring a minimum number of samples at each split, the tree is less likely to make decisions based on random fluctuations in the training data. This leads to a more stable and generalizable model.

- Limit Very Small Leaf Nodes: Nodes with fewer than min_samples_split samples are never split further, so the tree stops before carving the data into many tiny groups that might not represent the broader trends in the data. Note that a split can still produce a leaf with very few samples; min_samples_leaf is the parameter that guarantees a minimum leaf size.

In essence, setting min_samples_split helps the decision tree focus on learning robust patterns from the data instead of memorizing specific details that might not generalize well to unseen examples.

Here are some additional points to consider:

- The optimal value for min_samples_split depends on the size and complexity of your dataset. Experiment with different values and evaluate the model's performance on a held-out validation set to find the best setting.

- There's a trade-off between overfitting and underfitting. A very high min_samples_split could lead to underfitting (failing to capture important patterns), while a very low value might not provide enough protection against overfitting.
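To see the effect of this parameter without any library, the sketch below grows a toy one-feature regression tree and counts its leaves for two settings. It is a simplified stand-in for what DecisionTreeRegressor does, not scikit-learn's implementation: the data are made up, and `count_leaves` and `sse` are hypothetical helper names.

```python
def sse(ys):
    """Sum of squared errors around the mean (what MSE-based splitting reduces)."""
    mean = sum(ys) / len(ys)
    return sum((y - mean) ** 2 for y in ys)

def count_leaves(points, min_samples_split):
    """Greedily grow a 1-D regression tree and return its number of leaves.

    points: list of (x, y) pairs sorted by x.
    """
    ys = [y for _, y in points]
    # Stop when the node is too small to split or is already pure.
    if len(points) < min_samples_split or sse(ys) == 0:
        return 1
    best_i, best_cost = None, sse(ys)
    for i in range(1, len(points)):
        if points[i - 1][0] == points[i][0]:
            continue  # cannot split between identical x values
        cost = sse(ys[:i]) + sse(ys[i:])
        if cost < best_cost:
            best_i, best_cost = i, cost
    if best_i is None:
        return 1
    return (count_leaves(points[:best_i], min_samples_split)
            + count_leaves(points[best_i:], min_samples_split))

# Hypothetical years-of-experience vs. salary (in thousands), with some noise.
data = [(1, 30), (2, 34), (3, 33), (4, 45), (5, 44), (6, 60), (7, 58), (8, 75)]

leaves_default = count_leaves(data, min_samples_split=2)
leaves_strict = count_leaves(data, min_samples_split=4)
```

With `min_samples_split=2` the tree keeps splitting until every sample sits in its own leaf (8 leaves), which memorizes the noise. With `min_samples_split=4` it stops at 3 leaves and predicts a coarser, smoother step function.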

What other parameters are there for tuning a DecisionTreeRegressor?

DecisionTreeRegressor in scikit-learn offers several parameters for tuning the model's behavior and performance. Here are some key parameters beyond min_samples_split that you can adjust to optimize your decision tree:

Stopping Criteria:

max_depth: This parameter controls the maximum depth of the tree. A deeper tree can capture more complex relationships but is also more prone to overfitting. Experiment with different values to find the sweet spot between complexity and generalization.

min_samples_leaf: This sets the minimum number of samples required at a leaf node. Similar to min_samples_split, it helps prevent overfitting by avoiding overly specific leaf nodes based on few data points.

Splitting Criteria:

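criterion: This defines the function used to measure the quality of a split at each node. For DecisionTreeRegressor the options include "squared_error" (mean squared error, the default, called "mse" in older scikit-learn versions), "friedman_mse", "absolute_error", and "poisson". Criteria such as "gini" (Gini impurity) and "entropy" apply to DecisionTreeClassifier, not to regression. You can experiment with different criteria to see which one improves performance for your specific problem.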

Regularization:

max_features: This parameter controls the number of features considered at each split. A lower value can help reduce overfitting by preventing the tree from focusing on irrelevant features. However, setting it too low might prevent the tree from capturing important relationships.

Other Parameters:

random_state: Setting a fixed seed for random_state makes training reproducible, so that differences between runs with different hyperparameter configurations come from the hyperparameters rather than from randomness.

Tuning Techniques:

Once you've identified relevant parameters, consider techniques like grid search or randomized search to efficiently explore different parameter combinations and find the optimal configuration for your dataset.

By understanding and adjusting these parameters, you can effectively tune your DecisionTreeRegressor to achieve better performance and prevent overfitting. Remember to evaluate the model's performance on a held-out test set to avoid overfitting to the training data.
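As a rough illustration of the grid search mentioned above, here is a minimal sketch. The synthetic data from make_regression and the specific grid values are assumptions chosen only to keep the example self-contained; they are not recommended settings.

```python
from sklearn.datasets import make_regression
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in data (assumption): replace with your own X and y
X, y = make_regression(n_samples=300, n_features=8, noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Illustrative grid over the parameters discussed above
param_grid = {
    "max_depth": [3, 5, None],
    "min_samples_split": [2, 10],
    "min_samples_leaf": [1, 5],
    "max_features": [None, 0.5],
}

# 5-fold cross-validation on the training data only
search = GridSearchCV(
    DecisionTreeRegressor(random_state=0),
    param_grid,
    cv=5,
    scoring="neg_mean_squared_error",
)
search.fit(X_train, y_train)

best_params = search.best_params_
# R^2 of the refitted best tree on data that played no part in the search
test_r2 = search.best_estimator_.score(X_test, y_test)
print(best_params, round(test_r2, 3))
```

The search uses cross-validation inside the training set, so the test set is only touched once, for the final check.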

Share some scenario based questions for DecisionTreeRegressor.

Scenario-Based Questions for DecisionTreeRegressor:

Here are some questions that explore using DecisionTreeRegressor in various scenarios:

-

Customer Churn Prediction: You're building a model to predict customer churn for a telecommunications company. The features include tenure with the company, monthly call volume, service plan type, and customer satisfaction score. How can you use DecisionTreeRegressor to identify the key factors influencing customer churn?

- Solution:

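- Train a DecisionTreeRegressor with the target variable being a binary indicator of churn (0 for not churned, 1 for churned). Each leaf then predicts the churn rate of the training customers that fall into it. Because the target is binary, DecisionTreeClassifier is the more conventional choice for this task.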

- Analyze the feature importance scores provided by the model to understand which features (tenure, call volume, etc.) have the most significant influence on churn predictions.

- Visualize the decision tree to gain insights into the decision-making process for churn prediction.

-

Housing Price Estimation: You're developing a model to estimate housing prices based on features like square footage, number of bedrooms, location, and year built. How can you handle potential non-linear relationships between features and price using DecisionTreeRegressor?

- Solution:

- Decision trees can inherently capture non-linear relationships by splitting the data based on feature values. Train the DecisionTreeRegressor to predict the continuous target variable (housing price).

- Evaluate the model's performance on a held-out test set using metrics like mean squared error or R-squared.

- Consider comparing the performance of DecisionTreeRegressor with other models like Random Forest or Support Vector Machines with suitable kernels, which might be better suited for non-linear problems with larger datasets.

-

Anomaly Detection in Sensor Data: You're analyzing sensor data from a factory to detect anomalous readings that might indicate equipment malfunctions. The sensor readings are continuous numerical values. How can you use DecisionTreeRegressor for anomaly detection?

- Solution:

- Train a DecisionTreeRegressor to predict the typical sensor readings based on historical data.

- Identify data points that fall outside the decision tree's predictions (deviations from the learned patterns) as potential anomalies.

- Fine-tune the model parameters (min_samples_split, max_depth) to balance capturing normal variations and identifying true anomalies.

Remember, these are just a few examples. DecisionTreeRegressor can be applied to various scenarios where understanding the decision-making process and capturing non-linear relationships add value to the modeling task.

Can you show, through code, the use case of Anomaly Detection in Sensor Data using DecisionTreeRegressor?

Certainly, here's Python code demonstrating anomaly detection in sensor data using DecisionTreeRegressor:

import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Load sensor data (replace with your actual data)
data = pd.read_csv("sensor_data.csv")
sensor_readings = data["sensor_reading"]  # Single column of sensor readings

# Split data into training and testing sets.
# Caveat: the same column is used as both feature and target, so the tree
# mostly memorizes the values seen in training. In practice, predict the
# reading from other inputs (for example time or lagged readings).
X_train, X_test = train_test_split(sensor_readings, test_size=0.2, random_state=42)

# Train DecisionTreeRegressor to predict typical sensor readings
model = DecisionTreeRegressor(random_state=42)
model.fit(X_train.values.reshape(-1, 1), X_train)  # Reshape for single feature

# Predict sensor readings on the test set
predicted_readings = model.predict(X_test.values.reshape(-1, 1))

# Define threshold for anomaly detection (adjust based on data)
threshold = 0.1  # Example threshold, can be standard deviation or a percentile

# Detect anomalies based on prediction errors
anomalies = []
for i, (reading, prediction) in enumerate(zip(X_test, predicted_readings)):
    error = abs(reading - prediction)
    if error > threshold:
        anomalies.append(i)

# Print or process anomaly indices (positions within the test set)
print(f"Anomaly indices in test set: {anomalies}")

# You can further analyze the sensor data points at these anomaly indices

Explanation:

- Import Libraries: We import pandas for data manipulation, train_test_split from sklearn.model_selection to split the data, and DecisionTreeRegressor from sklearn.tree.

- Load Data: Replace "sensor_data.csv" with your actual CSV containing a column named "sensor_reading".

- Split Data: Split the data into training and testing sets using train_test_split. The test set will be used for anomaly detection.

- Train Model: Train the DecisionTreeRegressor model using model.fit(). Reshape the training data (X_train) to a 2D array for compatibility with the model (even though it's a single feature).

- Predict Sensor Readings: Predict sensor readings on the test set using model.predict().

- Define Anomaly Threshold: Set a threshold for anomaly detection. This could be a fixed value (like 0.1 here), a standard deviation from the predicted value, or a specific percentile.

- Detect Anomalies: Iterate through the test set and predicted readings. Calculate the absolute error (difference) between the actual and predicted values. If the error exceeds the threshold, consider the data point an anomaly and append its index to the anomalies list.

- Process Anomalies: The code prints the anomaly indices in the test set. You can use these indices to further analyze the corresponding sensor data points to investigate potential equipment malfunctions or unusual readings.

Note:

- Adjust the anomaly threshold based on the characteristics of your sensor data and the desired sensitivity for anomaly detection.

- This is a basic example. Consider techniques like cross-validation or hyperparameter tuning for a more robust model. You might also explore other anomaly detection algorithms depending on your specific needs.
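The example above uses the same column as both feature and target. One possible variant, sketched below, predicts each reading from the few readings before it and derives the threshold from the training errors. The synthetic sine signal, the injected spike, the helper make_lagged, and the choice of three lags are all assumptions made for illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)

# Synthetic stand-in for sensor data (assumption): noisy sine wave with one spike
t = np.arange(600)
signal = np.sin(2 * np.pi * t / 50) + rng.normal(0, 0.05, size=t.size)
spike_time = 500
signal[spike_time] += 3.0

def make_lagged(series, n_lags):
    """Return rows [x[t-n_lags], ..., x[t-1]] and the matching targets x[t]."""
    n_rows = len(series) - n_lags
    X = np.column_stack([series[i:i + n_rows] for i in range(n_lags)])
    y = series[n_lags:]
    return X, y

n_lags = 3
X, y = make_lagged(signal, n_lags)

# Chronological split (no shuffling), so the model never sees future readings
split = 400
model = DecisionTreeRegressor(max_depth=6, min_samples_leaf=5, random_state=0)
model.fit(X[:split], y[:split])

# Threshold derived from the training errors instead of a fixed constant
train_errors = np.abs(y[:split] - model.predict(X[:split]))
threshold = 3 * np.percentile(train_errors, 99)

test_errors = np.abs(y[split:] - model.predict(X[split:]))
# Map positions in the test slice back to time steps in the original signal
anomaly_times = (np.where(test_errors > threshold)[0] + split + n_lags).tolist()
print(f"Flagged time steps: {anomaly_times}")
```

Readings right after a spike may also be flagged, because the spike then appears among their lagged inputs.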

MCQs and Complex Scenario Based Questions on Logistic Regression Classifier

Please share 10 MCQs for Logistic Regression.

-

Logistic regression is a type of:

a) Unsupervised learning algorithm

b) Supervised learning algorithm for classification

c) Dimensionality reduction technique

d) Clustering algorithm

-

The output of a logistic regression model is a:

a) Continuous value between 0 and 1

b) Probability between 0 and 1

c) Discrete class label (e.g., 0 or 1)

d) None of the above

-

Which of the following assumptions does logistic regression make about the independent variables?

a) Must be normally distributed

b) Must have a linear relationship with the logit of the dependent variable

c) Must be categorical

d) None of the above

-

What is the most common cost function used in logistic regression?

a) Mean squared error

b) Logistic loss (Log loss)

c) Mean absolute error

d) Hinge loss

-

How can you handle categorical independent variables in logistic regression?

a) Ignore them

b) One-hot encode them

c) Use them directly as text

d) Convert them to ordinal variables

-

What is the difference between logistic regression and linear regression?

a) Logistic regression uses linear regression for prediction and then applies a sigmoid function to get probabilities.

b) Linear regression predicts continuous values, while logistic regression predicts probabilities.

c) Logistic regression uses a sigmoid function instead of a linear activation function.

d) All of the above

-

What is regularization used for in logistic regression?

a) To improve interpretability of the model

b) To prevent overfitting

c) Both a and b

d) None of the above

-

How can you evaluate the performance of a logistic regression model?

a) By looking at the accuracy

b) By using classification metrics like precision, recall, F1-score, and AUC-ROC curve

c) Both a and b

d) None of the above

-

What is the advantage of logistic regression compared to more complex models like decision trees?

a) It is generally easier to interpret

b) It can be faster to train and predict

c) Both a and b

d) None of the above

-

What is a limitation of logistic regression?

a) It can only handle two classes

b) It assumes a linear relationship between the independent variables and the logit of the dependent variable

c) Both a and b

d) None of the above

Share some complex scenario based questions for Logistic Regression.

Here are some complex scenario-based questions for Logistic Regression:

-

Imbalanced Class Distribution: You're building a logistic regression model to predict loan defaults. The historical data shows a significant imbalance, with a much larger number of successful repayments compared to defaults. How would you approach this scenario to ensure the model performs well for both classes (successful repayments and defaults)?

-

Feature Selection and Model Interpretability: You've trained a logistic regression model to predict customer churn for a telecommunications company. The model achieves good accuracy, but it includes a large number of features, making it difficult to understand which factors most contribute to customer churn. How would you balance model interpretability with maintaining good predictive performance?

-

Collinearity and Regularization: You're developing a logistic regression model to predict credit card fraud. Some of the features you're using might be highly correlated (e.g., transaction amount and purchase location). How can you address collinearity and its potential impact on the model's performance? Discuss the role of regularization in this scenario.

-

Non-linear Relationships and Model Selection: You're building a logistic regression model to predict housing prices based on features like square footage and number of bedrooms. However, you suspect that the relationship between these features and price might not be perfectly linear. How would you approach this scenario? Discuss the limitations of logistic regression and potential alternative modeling techniques that could capture non-linear relationships.

-

Concept Drift and Model Monitoring: You've trained a logistic regression model to predict spam emails. However, email spam tactics evolve over time. How would you monitor your logistic regression model's performance over time and identify potential concept drift (changes in the underlying data distribution)? Discuss strategies for adapting the model when concept drift is detected.

These scenarios require you to think critically about the strengths and limitations of logistic regression while considering real-world complexities like imbalanced data, collinearity, non-linear relationships, and data evolution.

Solve this:

Imbalanced Class Distribution: You're building a logistic regression model to predict loan defaults. The historical data shows a significant imbalance, with a much larger number of successful repayments compared to defaults. How would you approach this scenario to ensure the model performs well for both classes (successful repayments and defaults)?

Here's how you can approach building a logistic regression model for loan default prediction with an imbalanced class distribution (many successful repayments, few defaults):

Techniques to Address Imbalance:

-

Cost-Sensitive Learning: Modify the logistic regression cost function to assign higher weights to misclassifying loan defaults (minority class). This emphasizes the importance of correctly identifying defaults during training.

-

Class Resampling: Utilize techniques like SMOTE (Synthetic Minority Oversampling Technique) to oversample the minority class (defaults). SMOTE creates synthetic data points based on existing default examples, balancing the class distribution in the training set.

-

Under-sampling Majority Class (Consider with Caution): As a last resort, consider under-sampling the majority class (successful repayments) to match the size of the minority class. However, this approach discards potentially valuable data and might reduce model performance overall. Use it cautiously and evaluate its impact carefully.

Evaluation Metrics:

- Accuracy alone might be misleading due to the imbalance. Focus on metrics that consider both classes:

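- F1-score: The harmonic mean of precision (the share of predicted defaults that are actual defaults) and recall (the share of actual defaults that the model identifies).

- ROC curve and AUC: Analyze the model's ability to distinguish between defaults and non-defaults across different classification thresholds. With a strong imbalance, the precision-recall curve is often a more informative complement.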
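To see why accuracy alone can mislead here, the short calculation below compares two hypothetical confusion matrices for 1,000 loans with 50 defaults. The counts are invented for illustration only.

```python
def metrics(tp, fp, fn, tn):
    """Accuracy, precision, recall and F1 from confusion-matrix counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return accuracy, precision, recall, f1

# Model A (hypothetical): predicts "repaid" for everyone
always_repaid = metrics(tp=0, fp=0, fn=50, tn=950)

# Model B (hypothetical): catches 30 of the 50 defaults, with 40 false alarms
weighted_model = metrics(tp=30, fp=40, fn=20, tn=910)

for name, m in [("always repaid", always_repaid), ("weighted model", weighted_model)]:
    print(f"{name}: accuracy={m[0]:.3f} precision={m[1]:.3f} "
          f"recall={m[2]:.3f} f1={m[3]:.3f}")
```

Model A has the higher accuracy even though it never identifies a default, which is exactly what recall and F1 expose.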

Additional Considerations:

-

Feature Engineering: Analyze the loan data and identify features most predictive of defaults. This can improve model performance, especially for the minority class.

-

Regularization: Techniques like L1 or L2 regularization can help prevent overfitting, which is particularly important with imbalanced data.

-

Model Selection: Consider alternative models that might handle imbalanced data better, such as decision trees with cost-sensitive splitting or random forests. Compare their performance with logistic regression.

Monitoring and Refinement:

- Monitor the model's performance on the minority class over time. As new loan data becomes available, re-evaluate the class imbalance and potentially retrain the model with updated data and resampling techniques.

By implementing these strategies, you can mitigate the impact of imbalanced data and build a logistic regression model that performs well for both successful repayments and loan defaults. Remember to choose the most suitable techniques based on your specific data and evaluation criteria.

Can show some code example to implement: Cost Sensitive Learning for Logistic Regression

Here's a Python code example using scikit-learn to implement cost-sensitive learning for logistic regression:

import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.utils.class_weight import compute_class_weight

# Sample data (assuming features in X and target variable in y)
X = ...
y = ...

# Define class weights
classes = np.unique(y)
weights = compute_class_weight(class_weight='balanced', classes=classes, y=y)
class_weights = dict(zip(classes, weights))  # class_weight must be a dict (or 'balanced'), not an array

# Create cost-sensitive logistic regression model
cost_sensitive_model = LogisticRegression(class_weight=class_weights, solver='liblinear')  # Adjust solver as needed

# Train the model
cost_sensitive_model.fit(X, y)

# Make predictions
predictions = cost_sensitive_model.predict(X_new)  # X_new is unseen data

Explanation:

- Import libraries: We import LogisticRegression from sklearn.linear_model and compute_class_weight from sklearn.utils.class_weight.

- Sample Data: Replace X and y with your actual features and target variable for loan defaults (1 for default, 0 for successful repayment).

- Define Class Weights: compute_class_weight calculates weights based on the chosen strategy ('balanced' in this case).

- classes is set to the unique values in y (typically 0 and 1 for loan defaults).

- y is the target variable used to calculate weights based on class frequencies. The 'balanced' strategy assigns higher weights to the minority class.

- Create Cost-Sensitive Model:

- We instantiate the LogisticRegression model.

- class_weight is set to the calculated weights to emphasize correct classification of the minority class (loan defaults).

- solver can be adjusted based on your preference (e.g., 'liblinear' for smaller datasets).

- Train the Model: Train the model using cost_sensitive_model.fit(X, y).

- Make Predictions: Use the trained model to predict loan defaults for new data points in X_new.

Note:

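- This example uses 'balanced' class weights. compute_class_weight also accepts an explicit dictionary that maps each class label to a weight, which lets you set the misclassification costs yourself. Passing class_weight='balanced' directly to LogisticRegression has the same effect as computing the balanced weights beforehand.

- Adjust the solver parameter based on your dataset size and computational resources. liblinear is generally a good choice for smaller datasets.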

This code provides a basic example of cost-sensitive logistic regression. Remember to adapt it to your specific data and evaluation criteria.
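For intuition about what the 'balanced' strategy computes, scikit-learn documents it as n_samples / (n_classes * count of each class). The plain-Python sketch below reproduces that arithmetic; the helper name balanced_class_weights and the 950/50 split are assumptions for illustration.

```python
from collections import Counter

def balanced_class_weights(y):
    """weight(c) = n_samples / (n_classes * number of samples in class c)."""
    counts = Counter(y)
    n_samples = len(y)
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}

# Hypothetical loan data: 950 repayments (0) and 50 defaults (1)
y = [0] * 950 + [1] * 50
weights = balanced_class_weights(y)

# Class 0: 1000 / (2 * 950), class 1: 1000 / (2 * 50)
print(weights)
```

Each default therefore counts 19 times as much as each repayment in the loss, which is the inverse of the 950:50 class ratio.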

For handling imbalanced classes, you have decided to use SMOTE. In what phase of the Machine Learning project do you apply it? Before test train split or after test train split?

You should apply SMOTE after splitting your data into training and test sets. Here's why:

-

Data Leakage: Applying SMOTE before the split leaks information about the test set into the training set. Since SMOTE generates synthetic data based on existing data points, using it before the split could introduce information from the test set (which the model shouldn't see during training) into the training data. This can lead to overfitting and unreliable performance evaluation.

-

Separate Evaluations: The test set is used to evaluate the model's performance on unseen data. By applying SMOTE only to the training data, you ensure a fair evaluation where the model is tested on its ability to generalize to new, unseen examples (including potential imbalanced distributions) that it hasn't encountered during training.

The Ideal Workflow:

- Split your data into training and test sets.

- Apply SMOTE (or any other resampling technique) to the training set only to address the class imbalance.

- Train your model on the augmented training set.

- Evaluate the model's performance on the untouched test set.

This approach ensures that the model is trained on a balanced representation of the classes while the test set remains unchanged, providing a more reliable assessment of the model's generalization ability in the presence of imbalanced data.
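The four steps can be sketched as follows. To avoid an extra dependency, this sketch uses random oversampling via sklearn.utils.resample as a stand-in for SMOTE; it is not SMOTE itself. The synthetic data from make_classification is also an assumption.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split
from sklearn.utils import resample

# Synthetic imbalanced data (assumption): roughly 5% positives
X, y = make_classification(
    n_samples=2000, n_features=10, weights=[0.95, 0.05], random_state=0
)

# 1. Split first; stratify keeps the class ratio in both parts
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# 2. Oversample the minority class in the training set only.
#    With imbalanced-learn installed, SMOTE(random_state=0).fit_resample(X_train, y_train)
#    would take the place of this step.
majority = X_train[y_train == 0]
minority = X_train[y_train == 1]
minority_up = resample(minority, replace=True, n_samples=len(majority), random_state=0)
X_bal = np.vstack([majority, minority_up])
y_bal = np.concatenate([
    np.zeros(len(majority), dtype=int),
    np.ones(len(minority_up), dtype=int),
])

# 3. Train on the balanced training set
model = LogisticRegression(max_iter=1000).fit(X_bal, y_bal)

# 4. Evaluate on the untouched, still imbalanced test set
test_recall = recall_score(y_test, model.predict(X_test))
print(f"Recall on minority class (test set): {test_recall:.3f}")
```

The test set keeps its original class ratio, so the reported recall reflects the conditions the model would face in production.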

Solve this:

Feature Selection and Model Interpretability: You've trained a logistic regression model to predict customer churn for a telecommunications company. The model achieves good accuracy, but it includes a large number of features, making it difficult to understand which factors most contribute to customer churn. How would you balance model interpretability with maintaining good predictive performance?

Balancing model interpretability with good predictive performance in logistic regression for customer churn prediction requires a thoughtful approach. Here's how you can navigate this scenario:

Feature Selection Techniques:

-

Filter Methods: Utilize techniques like chi-square tests or information gain to identify features that have a statistically significant correlation with customer churn. These methods are efficient and provide some interpretability by highlighting features with strong relationships to the target variable.

-

Wrapper Methods: Employ techniques like recursive feature elimination (RFE) that iteratively remove features and retrain the model, evaluating the impact on performance. Choose the feature set that achieves a good balance between accuracy and the number of features retained.

-

Embedded Methods: Explore methods like L1-regularized logistic regression. L1 regularization penalizes the coefficients of features during training, potentially driving some coefficients to zero. Features with zero coefficients can be considered less important for prediction, offering interpretability alongside model selection.

Maintaining Predictive Performance:

- Feature Importance Scores: After feature selection, analyze the importance scores assigned by techniques like RFE or feature permutation to understand which features contribute most to the model's predictions.

- Model Comparison: Compare the performance of the model with the reduced feature set to the original model. If the performance drop is minimal, the chosen features likely capture the essential information for churn prediction.

- Domain Knowledge: Consider your understanding of the telecommunications industry and customer behavior. Prioritize features that align with your domain expertise and are intuitively linked to churn. Discard features that seem irrelevant or redundant based on your knowledge.

Additional Techniques for Interpretability:

- Coefficient Analysis: Analyze the coefficients of the logistic regression model. Features with larger positive coefficients are associated with a higher likelihood of churn, while those with negative coefficients might indicate factors that deter churn. Coefficient magnitudes are only comparable across features when the features are on the same scale (for example, after standardization). This analysis provides interpretability but requires caution as coefficients might not always have a straightforward causal relationship with churn.

- Partial Dependence Plots (PDPs): Visualize the effect of individual features on the predicted probability of churn. PDPs can reveal non-linear relationships and help understand how specific features influence churn predictions.

By combining feature selection techniques with interpretability analysis, you can achieve a balance between model complexity and understanding the key factors driving customer churn. Remember, the chosen features should provide both good predictive power and align with your knowledge of the customer churn domain. Continuously evaluate the trade-off between interpretability and performance based on your specific requirements and priorities.

Can you share code example to do Coefficient Analysis?

Certainly! Here's a Python code example using scikit-learn to perform coefficient analysis for a trained logistic regression model:

import pandas as pd
from sklearn.linear_model import LogisticRegression

# Load your data (replace with your actual data)
data = pd.read_csv("churn_data.csv")
X = data.drop("churn", axis=1)  # Features (assumed to be numeric already)
y = data["churn"]  # Target variable (churn)

# Train the logistic regression model (skip this step if you already have a trained model)
model = LogisticRegression()
model.fit(X, y)

# Get coefficients
coefficients = model.coef_[0]  # coef_ has shape (1, n_features) for binary classification

# Get feature names
feature_names = X.columns

# Print results
print("Feature \t Coefficient")
for feature, coef in zip(feature_names, coefficients):
    print(f"{feature} \t {coef:.4f}")

Explanation:

- Import libraries: We import pandas for data manipulation and LogisticRegression from sklearn.linear_model.

- Load Data: Replace "churn_data.csv" with your actual data path. The code separates features (X) and the target variable (y).

- Train Model (Placeholder): We assume you have a trained model. Replace model.fit(X, y) with your model training code.

- Get Coefficients: Access the model's coefficients using model.coef_. For binary classification this array has a single row, so the first element ([0]) retrieves the coefficients, which describe the effect on the log odds of the positive class (churn).

- Get Feature Names: Extract feature names from the DataFrame columns (X.columns).

- Print Results: Iterate through features and coefficients, printing them in a clear format.

This code snippet provides a basic example of coefficient analysis for logistic regression. The coefficients indicate the relative influence of each feature on the predicted probability of churn. However, remember that coefficients don't always represent direct causal relationships.
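Raw coefficients depend on the units of each feature, so one common way to make them comparable is to standardize the features first. The sketch below does this in a pipeline and also converts coefficients to odds ratios. The synthetic data and the feature names are hypothetical placeholders, not real churn data.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for churn data (assumption)
X, y = make_classification(
    n_samples=500, n_features=5, n_informative=3, n_redundant=0, random_state=0
)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]  # hypothetical names

# Standardize, then fit, so each coefficient refers to a one-standard-deviation change
pipeline = make_pipeline(StandardScaler(), LogisticRegression())
pipeline.fit(X, y)

coefs = pipeline.named_steps["logisticregression"].coef_[0]
odds_ratios = np.exp(coefs)  # multiplicative change in the odds per 1 SD increase

# Rank features by absolute coefficient size
order = np.argsort(-np.abs(coefs))
for i in order:
    print(f"{feature_names[i]}\t coef={coefs[i]:+.3f}\t odds ratio={odds_ratios[i]:.3f}")
```

An odds ratio above 1 means the feature raises the odds of the positive class, and below 1 means it lowers them, holding the other features fixed.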

Solve this:

Collinearity and Regularization: You're developing a logistic regression model to predict credit card fraud. Some of the features you're using might be highly correlated (e.g., transaction amount and purchase location). How can you address collinearity and its potential impact on the model's performance? Discuss the role of regularization in this scenario.

Addressing Collinearity and Regularization in Logistic Regression for Credit Card Fraud Prediction

Collinearity, where features in your credit card fraud prediction model are highly correlated, can negatively impact your logistic regression model's performance. Here's how to address it and the role of regularization:

Identifying Collinearity:

- Correlation Matrix: Calculate the correlation matrix between features. Features with correlation coefficients close to 1 or -1 are likely highly correlated.

- Visualization Techniques: Utilize techniques like heatmaps or scatter plots to visualize correlations between features and identify potential collinearity visually.
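A correlation check of this kind can be sketched in a few lines. The three synthetic features below (one of them deliberately a near-copy of another) and the 0.9 cut-off are assumptions for illustration, not values taken from a real fraud dataset.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500

# Hypothetical transaction features; amount_cents is almost a rescaled copy of amount
amount = rng.normal(100, 30, n)
amount_cents = amount * 100 + rng.normal(0, 50, n)
hour = rng.uniform(0, 24, n)

X = np.column_stack([amount, amount_cents, hour])
names = ["amount", "amount_cents", "hour"]

# Pearson correlation matrix between columns
corr = np.corrcoef(X, rowvar=False)

# List feature pairs whose absolute correlation exceeds the cut-off
threshold = 0.9
high_pairs = [
    (names[i], names[j], float(corr[i, j]))
    for i in range(len(names))
    for j in range(i + 1, len(names))
    if abs(corr[i, j]) > threshold
]
print(high_pairs)
```

Pairwise correlation only catches two-feature redundancy; a feature that is a combination of several others needs a different check.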

Addressing Collinearity:

- Feature Selection: Employ techniques like feature importance scores, wrapper methods (e.g., RFE), or embedded methods (L1 regularization) to identify and remove redundant features while maintaining good predictive power. Aim to retain the most informative and non-collinear features.

- Dimensionality Reduction: Explore techniques like Principal Component Analysis (PCA) to create new, uncorrelated features (principal components) based on the original features. This can reduce dimensionality and address collinearity while preserving most of the information.

Regularization and Collinearity:

Regularization techniques penalize the coefficients of features during training, effectively reducing their influence on the model. This can help mitigate the impact of collinearity in several ways:

- Reduced Variance: Regularization shrinks the magnitude of coefficients, especially for highly correlated features. This helps stabilize the model's estimates and reduces the variance of predictions, making them less sensitive to small changes in the data.

- Improved Generalizability: By preventing large coefficients for any single feature, regularization encourages the model to rely on a combination of features for prediction. This can lead to a more robust model that generalizes better to unseen data.

L1 vs. L2 Regularization for Collinearity:

Logistic regression commonly uses L1 (LASSO) or L2 (Ridge) regularization:

-

L1 Regularization:

- Sets some coefficients exactly to zero. Within a group of highly correlated features it tends to keep one and drop the others, although which one is kept can be somewhat arbitrary.

- This leads to sparser models with fewer features, making them potentially more interpretable and less prone to overfitting due to collinearity.

-

L2 Regularization:

- Shrinks the coefficients of all features towards zero, but not necessarily to zero.

- This approach still utilizes all features but reduces their influence if they are highly correlated. It might be less interpretable but can sometimes lead to better predictive performance compared to L1 in certain scenarios.

Choosing the Right Regularization:

The optimal choice between L1 and L2 regularization depends on factors like your data and desired model characteristics:

- If interpretability is a priority, and you want to identify and potentially remove redundant features, L1 might be a good choice.

- If generalizability and avoiding overfitting due to collinearity are crucial, L2 could be a better option.

Experimentation with both L1 and L2 using different regularization hyperparameter values is recommended to find the approach that yields the best performance for your specific credit card fraud prediction model.

Conclusion:

By identifying and addressing collinearity through feature selection, dimensionality reduction, or leveraging regularization techniques, you can significantly improve the stability, interpretability, and generalizability of your logistic regression model for credit card fraud prediction.

Can you share code for L1 Regularization for Logistic Regression?

Certainly! Here's a Python code example using scikit-learn to implement L1 regularization for logistic regression:

from sklearn.linear_model import LogisticRegression

# Sample data (assuming features in X and target variable in y)

X = ...

y = ...

# Create L1 regularized logistic regression model

l1_model = LogisticRegression(penalty='l1', solver='liblinear') # Adjust solver as needed

# Set regularization parameter (C)

l1_model.set_params(C=1.0) # Adjust C based on hyperparameter tuning

# Train the model

l1_model.fit(X, y)

# Make predictions

predictions = l1_model.predict(X_new) # X_new is unseen data

Explanation:

- Import Library: We import LogisticRegression from sklearn.linear_model.

- Sample Data: Replace X and y with your actual features and target variable for credit card fraud prediction (1 for fraud, 0 for legitimate transaction).

- Create L1 Model:

- We instantiate the LogisticRegression model.

- penalty='l1' specifies L1 regularization.

- solver='liblinear' is a suitable solver for L1-regularized logistic regression, especially for smaller datasets. Adjust the solver based on your dataset size and computational resources.

- Set Regularization Parameter (C):

- The C parameter controls the strength of the regularization. A higher C leads to less shrinkage of coefficients, while a lower C results in more shrinkage and potentially sparser models with fewer features. You'll typically need to tune this parameter using techniques like grid search or randomized search. Here, we set C=1.0 as a starting point for illustration.

- Train the Model: Train the L1-regularized model using l1_model.fit(X, y).

- Make Predictions: Use the trained model to predict credit card fraud for new data points in X_new.

Note:

- Remember to tune the C parameter using techniques like grid search or randomized search to find the optimal value for your specific dataset and desired model complexity.

- Libraries like scikit-learn provide functionalities for hyperparameter tuning, such as GridSearchCV or RandomizedSearchCV.

This code snippet demonstrates the basic implementation of L1 regularization for logistic regression. Adapt it to your specific data and consider hyperparameter tuning for the best results.
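To make the effect of C concrete, the sketch below fits the same L1-regularized model for several values of C and counts the non-zero coefficients. The synthetic data and the list of C values are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

# Synthetic data (assumption): 20 features, only some of them informative
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=4, n_redundant=4, random_state=0
)
X = StandardScaler().fit_transform(X)  # put features on one scale before penalizing

nonzero_counts = {}
for C in [0.01, 0.1, 1.0, 10.0]:
    model = LogisticRegression(penalty="l1", solver="liblinear", C=C)
    model.fit(X, y)
    # Features whose coefficient is exactly zero are effectively dropped
    nonzero_counts[C] = int(np.count_nonzero(model.coef_))
    print(f"C={C}: {nonzero_counts[C]} non-zero coefficients out of {X.shape[1]}")
```

Smaller values of C mean stronger regularization, so the count of surviving features generally shrinks as C decreases.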

Addressing Non-linear Relationships in Housing Price Prediction with Logistic Regression

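Logistic regression assumes a linear relationship between features and the log odds of the target variable. Note that it is a classification model: it fits this scenario only if price is turned into categories (for example, above or below a price threshold). For predicting the price itself as a continuous value, linear regression is the linear counterpart. In either case, housing prices might not have a perfectly linear relationship with features like square footage or number of bedrooms. Here's how to approach this scenario: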

Exploring Non-linearity:

- Scatter Plots: Visualize the relationships between features (square footage, bedrooms) and price using scatter plots. Look for non-linear patterns like curves, bends, or clusters.

- Feature Engineering: Consider creating new features that capture non-linear relationships. For example, create features like square footage squared or a categorical variable for bedroom count ranges (e.g., "1-2 bedrooms," "3-4 bedrooms"). These new features can be incorporated into the logistic regression model.
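The feature-engineering idea can be illustrated on a toy problem. The sketch below compares plain logistic regression with one that receives squared and interaction terms. The make_circles dataset is a synthetic stand-in (an assumption), chosen because its two classes cannot be separated by a straight line; it is not housing data.

```python
from sklearn.datasets import make_circles
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Two classes arranged as an inner and an outer ring (not linearly separable)
X, y = make_circles(n_samples=600, noise=0.1, factor=0.3, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Baseline: logistic regression on the raw features
linear_model = LogisticRegression().fit(X_train, y_train)

# Same model, but with squared and interaction terms added as new features
poly_model = make_pipeline(
    PolynomialFeatures(degree=2, include_bias=False),
    StandardScaler(),
    LogisticRegression(),
).fit(X_train, y_train)

linear_acc = linear_model.score(X_test, y_test)
poly_acc = poly_model.score(X_test, y_test)
print(f"raw features: {linear_acc:.3f}, with degree-2 features: {poly_acc:.3f}")
```

The model stays linear in its parameters; only the inputs change, which is why the engineered version can draw a curved boundary in the original feature space.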

Limitations of Logistic Regression:

Logistic regression is powerful for linear relationships but struggles to capture complex non-linear patterns. This can lead to inaccurate predictions, particularly for houses with extreme values in features like square footage.

Alternative Modeling Techniques:

Here are some alternative techniques that can handle non-linear relationships:

- Decision Trees: These models can automatically capture non-linearity by splitting the data based on feature values. They are interpretable and work well with various data types.

- Random Forests: Combining multiple decision trees improves accuracy and reduces overfitting. They can handle non-linearity effectively.

- Support Vector Machines (SVMs): SVMs can be used for classification (price categories) or regression (price prediction) with non-linear kernels. However, they can be less interpretable compared to decision trees.

- Neural Networks: Deep neural networks excel at capturing complex non-linear relationships but require larger datasets for training and can be less interpretable as "black boxes."

Model Selection and Evaluation:

- Train logistic regression with and without feature engineering (if applicable).

- Train alternative models like decision trees, random forests, SVMs (with appropriate kernels), or even neural networks (if data size allows).

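- Evaluate all models on a held-out test set using metrics suited to the task: accuracy or F1-score when predicting price categories, and mean squared error or R-squared when predicting the price itself as a regression task.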

- Choose the model with the best performance on the unseen test set, considering factors like accuracy, interpretability (if needed), and computational complexity.
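The comparison steps above might look like the following scikit-learn sketch. It is only an illustration under assumptions: the data is a synthetic two-class set from `make_moons` standing in for a non-linear problem (not real housing data), and the model names and settings are arbitrary choices for the example.

```python
from sklearn.datasets import make_moons
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures, StandardScaler

# Synthetic stand-in for a non-linear, two-class problem (assumption).
X, y = make_moons(n_samples=1000, noise=0.2, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

models = {
    # Plain logistic regression: linear decision boundary.
    "logreg": make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
    # Logistic regression with engineered polynomial features.
    "logreg_poly": make_pipeline(
        PolynomialFeatures(degree=3, include_bias=False),
        StandardScaler(),
        LogisticRegression(max_iter=1000),
    ),
    # A non-linear alternative.
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=0),
}

# Fit each model on the training split and score it on the held-out split.
scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = accuracy_score(y_test, model.predict(X_test))

for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

In a real project it is usually safer to pick the model with cross-validation or a separate validation split and keep the test set for a final check.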

Additional Considerations:

- Data size: Some techniques like neural networks require large datasets for effective training.

- Interpretability: If understanding the factors influencing price is crucial, logistic regression coefficients or a single decision tree are easier to explain than random forests, SVMs, or neural networks, although random forests do provide feature importances.

By exploring different approaches and evaluating their performance, you can select a model that effectively captures both linear and non-linear relationships in housing price prediction, potentially leading to more accurate forecasts.

Solve this:

Concept Drift and Model Monitoring: You've trained a logistic regression model to predict spam emails. However, email spam tactics evolve over time. How would you monitor your logistic regression model's performance over time and identify potential concept drift (changes in the underlying data distribution)? Discuss strategies for adapting the model when concept drift is detected.

Monitoring Logistic Regression for Spam Detection and Handling Concept Drift

Spam email tactics are constantly evolving, requiring your logistic regression model for spam detection to adapt. Here's how to monitor your model's performance and handle concept drift:

Monitoring Techniques:

- Performance Metrics: Monitor key metrics like accuracy, precision, recall, and F1-score over time on recent labeled emails that were not used for training. A sudden or sustained drop in performance could indicate concept drift.

- Error Analysis: Regularly analyze misclassified emails. Are there new spam patterns the model struggles with? This can provide insights into the nature of concept drift.

- Distribution Analysis: Track the distribution of features in incoming emails. Shifts in distributions (e.g., word frequency, sender information) might signal changes in spam tactics.

- Alerting System: Set up automated alerts that trigger when performance metrics fall below a predefined threshold. This helps identify potential concept drift quickly.
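The monitoring ideas above can be sketched as a windowed metric check plus a simple distribution test. This is a rough sketch under assumptions: the labels and predictions are simulated, the helper names `windowed_f1` and `alert_windows` are made up for the example, and the 0.9 threshold is arbitrary. The two-sample Kolmogorov-Smirnov test from SciPy is one of several possible ways to compare a feature's distribution across time periods.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.metrics import f1_score


def windowed_f1(y_true, y_pred, window):
    """F1-score for each consecutive, non-overlapping window of predictions."""
    scores = []
    for start in range(0, len(y_true) - window + 1, window):
        sl = slice(start, start + window)
        scores.append(f1_score(y_true[sl], y_pred[sl], zero_division=0))
    return np.array(scores)


def alert_windows(scores, threshold):
    """Indices of windows whose score fell below the alert threshold."""
    return [i for i, s in enumerate(scores) if s < threshold]


rng = np.random.default_rng(0)

# Simulated labels and predictions (assumption): perfect for the first
# 400 emails, then about 40% of predictions are wrong to mimic drift.
y_true = rng.integers(0, 2, size=600)
y_pred = y_true.copy()
flip = rng.random(200) < 0.4
y_pred[400:] = np.where(flip, 1 - y_pred[400:], y_pred[400:])

scores = windowed_f1(y_true, y_pred, window=200)
alerts = alert_windows(scores, threshold=0.9)
print("F1 per window:", scores.round(2), "alerts:", alerts)

# Distribution check on one numeric feature (simulated values):
# compare a reference period against the most recent period.
reference = rng.normal(loc=0.0, scale=1.0, size=500)
current = rng.normal(loc=1.0, scale=1.0, size=500)
drift_p = ks_2samp(reference, current).pvalue
print("KS p-value:", drift_p)
```

A small p-value suggests the feature's distribution has shifted, which is a hint to investigate rather than proof that the model has degraded.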

Adapting the Model to Concept Drift:

- Retraining: Regularly retrain the model with new data that reflects the latest spam trends. This incorporates the evolving spam patterns into the model's knowledge.

- Online Learning: If frequent retraining becomes cumbersome, consider online learning algorithms that can update the model incrementally with new data points.

- Concept Drift Detection Techniques: Explore techniques like the Early Drift Detection Method (EDDM) or Cumulative Sum (CUSUM) algorithms, which statistically detect changes in the model's error rate or in a monitored data stream. These can be used to trigger retraining or adaptation strategies.

- Ensemble Techniques: Consider using ensemble methods like bagging or boosting. These combine multiple logistic regression models trained on slightly different subsets of data. They can be more robust to concept drift than a single model.

Additional Considerations:

- Data Labeling: Ensure the data used for retraining is accurately labeled, especially new spam patterns, to maintain model effectiveness.

- Retraining Frequency: Weigh the cost of retraining against the need to adapt to concept drift. One option is to combine continuous monitoring with a periodic retraining schedule, plus extra retraining whenever an alert threshold is crossed.

- Model Complexity: A simpler logistic regression model might be more adaptable to concept drift compared to a highly complex model.

Remember: There's no one-size-fits-all solution to concept drift. Experiment with different monitoring and adaptation techniques to find the most effective approach for your specific spam detection scenario. By proactively monitoring and adapting your logistic regression model, you can ensure it remains effective in the ever-evolving world of email spam.

What are some common scenarios encountered in Machine Learning projects involving Logistic Regression?

Here are some common scenarios encountered in machine learning projects, particularly those involving logistic regression:

Complex Scenario-Based Questions for Logistic Regression:

Imbalanced Class Distribution:

- Scenario: You're building a logistic regression model to predict loan defaults, but the historical data shows a significant imbalance, with far more successful repayments than defaults.

- Solution:

  - Apply cost-sensitive learning to assign a higher penalty to misclassifying loan defaults (the minority class) during training.

  - Use class resampling techniques like SMOTE (Synthetic Minority Oversampling Technique) to oversample the minority class and balance the training set.

  - Consider under-sampling the majority class (with caution) as a last resort. This discards potentially valuable data and might reduce model performance.

  - Evaluate using metrics that consider both classes, such as F1-score and ROC-AUC, rather than just accuracy.
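The cost-sensitive option above can be tried with scikit-learn's `class_weight="balanced"` setting. This is a minimal sketch under assumptions: the data is synthetic (about 5% positives from `make_classification`, standing in for loan defaults), and only class weighting is shown, not SMOTE, which lives in the separate imbalanced-learn package.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score, recall_score, roc_auc_score
from sklearn.model_selection import train_test_split

# Synthetic imbalanced data (assumption): roughly 5% "defaults".
X, y = make_classification(
    n_samples=5000, n_features=10, n_informative=5,
    weights=[0.95, 0.05], random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

results = {}
for name, class_weight in [("unweighted", None), ("balanced", "balanced")]:
    # "balanced" weights errors inversely to class frequency.
    clf = LogisticRegression(class_weight=class_weight, max_iter=1000)
    clf.fit(X_train, y_train)
    pred = clf.predict(X_test)
    proba = clf.predict_proba(X_test)[:, 1]
    results[name] = {
        "recall": recall_score(y_test, pred),
        "f1": f1_score(y_test, pred),
        "roc_auc": roc_auc_score(y_test, proba),
    }

for name, metrics in results.items():
    print(name, {k: round(v, 3) for k, v in metrics.items()})
```

Class weighting typically raises recall on the minority class at the cost of more false positives, so the F1-score and the business cost of each error type should guide the final choice.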

Feature Selection and Model Interpretability:

- Scenario: You've trained a logistic regression model to predict customer churn for a telecommunications company. While it achieves good accuracy, it contains many features, making it difficult to understand which factors contribute most to churn.

- Solution:

  - Employ feature selection techniques like filter methods (chi-square tests, information gain), wrapper methods (recursive feature elimination), or embedded methods (L1-regularized logistic regression) to identify the features most relevant to churn.

  - Confirm that predictive power is maintained by comparing the performance of the reduced feature set to the original model.

  - Leverage domain knowledge to prioritize features intuitively linked to churn and discard irrelevant ones.

  - Utilize coefficient analysis to understand the influence of individual features on churn predictions. Be cautious, however, as coefficients don't always represent direct causal relationships.

  - Consider partial dependence plots (PDPs) to visualize the effect of features on churn probability.
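As one possible illustration of the wrapper approach and coefficient analysis described above, the sketch below uses recursive feature elimination (RFE) from scikit-learn. The data is synthetic, the `feature_i` names are placeholders, and keeping four features is an arbitrary assumption for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for churn data (assumption): 12 features, 4 informative.
X, y = make_classification(
    n_samples=1000, n_features=12, n_informative=4,
    n_redundant=0, random_state=0,
)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0
)

# Scale so coefficient magnitudes are comparable across features.
scaler = StandardScaler().fit(X_train)
X_train_s, X_test_s = scaler.transform(X_train), scaler.transform(X_test)

# Wrapper method: repeatedly drop the feature with the weakest coefficient.
selector = RFE(LogisticRegression(max_iter=1000), n_features_to_select=4)
selector.fit(X_train_s, y_train)
selected = [n for n, keep in zip(feature_names, selector.support_) if keep]

# Compare the full model with the reduced one on held-out data.
full = LogisticRegression(max_iter=1000).fit(X_train_s, y_train)
reduced = LogisticRegression(max_iter=1000).fit(
    X_train_s[:, selector.support_], y_train
)
full_acc = full.score(X_test_s, y_test)
reduced_acc = reduced.score(X_test_s[:, selector.support_], y_test)

# exp(coefficient) is the odds ratio per one standard deviation increase.
odds_ratios = dict(zip(selected, np.exp(reduced.coef_[0])))

print("selected:", selected)
print(f"full accuracy: {full_acc:.3f}, reduced accuracy: {reduced_acc:.3f}")
print({k: round(float(v), 2) for k, v in odds_ratios.items()})
```

An odds ratio above 1 means the feature raises the predicted odds of the positive class, and a value below 1 means it lowers them, holding the other selected features fixed.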

Collinearity and Regularization:

- Scenario: You're developing a logistic regression model to predict credit card fraud, but some features might be highly correlated (e.g., transaction amount and purchase location).

- Solution:

  - Identify collinearity using techniques like correlation matrices or visualization (heatmaps, scatter plots).

  - Address collinearity by:

    - Removing redundant features using feature selection

    - Employing dimensionality reduction techniques like Principal Component Analysis (PCA)

  - Implement regularization (L1 or L2):

    - L1 regularization (LASSO) can drive some coefficients to exactly zero, often keeping only one feature from a highly correlated group.

    - L2 regularization (Ridge) shrinks coefficients but retains all features.

  - Choose between L1 and L2 based on the data and the desired model: L1 yields a sparser, easier-to-interpret model, while L2 keeps all features and tends to be more stable when features are correlated. Tune the regularization strength with cross-validation to control overfitting.
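The detection and regularization steps above can be sketched as follows. The data is simulated, and the column names (`amount`, `amount_usd`, `hour`, `noise`), the 0.9 correlation cutoff, and `C=0.01` are all assumptions chosen for illustration, with `amount_usd` built as a near-duplicate of `amount`.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 2000

# Simulated transactions (assumption): "amount_usd" nearly duplicates "amount".
amount = rng.normal(size=n)
X = pd.DataFrame({
    "amount": amount,
    "amount_usd": amount + rng.normal(scale=0.05, size=n),
    "hour": rng.normal(size=n),
    "noise": rng.normal(size=n),
})
logits = 1.5 * X["amount"] - 1.0 * X["hour"]
y = (rng.random(n) < 1 / (1 + np.exp(-logits))).astype(int)

# Step 1: flag feature pairs with high absolute correlation.
corr = X.corr().abs()
cols = list(corr.columns)
high_pairs = [
    (a, b)
    for i, a in enumerate(cols)
    for b in cols[i + 1:]
    if corr.loc[a, b] > 0.9
]
print("highly correlated pairs:", high_pairs)

# Step 2: fit L1- and L2-regularized models on scaled features.
X_scaled = StandardScaler().fit_transform(X)
l1 = LogisticRegression(penalty="l1", solver="liblinear", C=0.01).fit(X_scaled, y)
l2 = LogisticRegression(C=0.01, max_iter=1000).fit(X_scaled, y)  # L2 is the default

coefs = pd.DataFrame(
    {"l1": l1.coef_[0], "l2": l2.coef_[0]}, index=cols
)
n_zero_l1 = int((coefs["l1"] == 0).sum())
n_zero_l2 = int((coefs["l2"] == 0).sum())
print(coefs.round(3))
print("zeroed by L1:", n_zero_l1, "zeroed by L2:", n_zero_l2)
```

Comparing the two coefficient columns shows how each penalty treats the correlated pair: L1 tends to concentrate the weight on one of the two features, while L2 tends to spread it across both.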

Non-linear Relationships and Model Selection:

- Scenario: You're building a logistic regression model to predict housing prices (framed as price categories, since logistic regression is a classifier) based on features like square footage and number of bedrooms, but you suspect the relationships might not be perfectly linear.

- Solution:

  - Explore non-linearity with scatter plots or by creating new features that capture non-linear connections (e.g., square footage squared, categorical bedroom count ranges).

  - Recognize the limitations of logistic regression for handling non-linearity.

  - Consider alternative models:

    - Decision Trees and Random Forests: Offer flexibility for non-linear relationships and provide interpretability.

    - Support Vector Machines (SVMs): Can be used with non-linear kernels, but interpretability might be lower.

    - Neural Networks: Excellent for capturing non-linearity, but require larger datasets and can be "black boxes."

  - Select the model through evaluation on a held-out test set using appropriate metrics: classification metrics such as accuracy or F1-score for price categories, or mean squared error and R-squared if price is modeled as a regression target. Consider factors like accuracy, interpretability, and computational complexity.

Additional Considerations:

- Data size: Some techniques, like neural networks, might require larger datasets.

- Interpretability: If understanding factors influencing predictions is crucial, prioritize interpretable models like decision trees over less interpretable ones.

- Experimentation: Try various approaches and evaluate their performance to find the best fit for your dataset and problem.

By addressing these complex scenarios and their potential solutions, you can build robust and effective logistic regression models.
